
Question 1

Read Chapter 4 of Nilsson’s book (which you downloaded for Assignment 1). Take special note of Section 4.1, with its discussion of decision boundaries and their polarity, and of the concept of the neural network weight space. Here the terms TLU (threshold logic unit) and Perceptron refer to the same construct: a neuron with weights and a threshold activation function. Similarly, the terms hyperplane, decision boundary, and decision surface all refer to the same concept: a linear boundary that divides a space of any number of dimensions into two sub-spaces. In a 2-dimensional space the decision boundary is a straight line. There is a direct mapping between the decision boundary (or boundaries) and the weights of the neural network.

Go to https://www.thomascountz.com/2018/04/13/calculate-decision-boundary-of-perceptron, which shows how to draw the decision boundary of a Perceptron from its known weights using nothing more than the equation of a straight line. You can also look at https://www.thomascountz.com/2018/03/23/perceptrons-in-neural-networks.

Question 1(a)

Consider the Perceptron in Figure 1. The bias and weights are shown in the figure. It uses the threshold activation function:

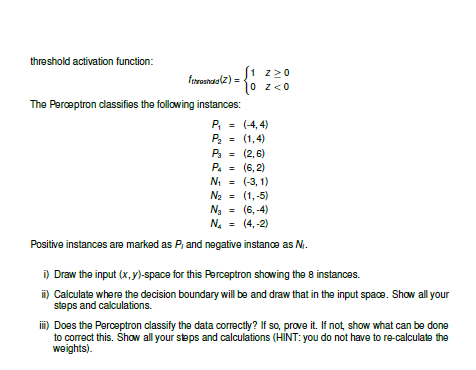

$$f_{\text{threshold}}(z) = \begin{cases} 1, & z \ge 0 \\ 0, & z < 0 \end{cases}$$

The Perceptron classifies the following instances:

$P_1 = (-4, 4)$

$P_2 = (1, 4)$

$P_3 = (2, 6)$

$P_4 = (6, 2)$

$N_1 = (-3, 1)$

$N_2 = (1, -5)$

$N_3 = (6, -4)$

$N_4 = (4, -2)$

Positive instances are marked as $P_i$ and negative instances as $N_i$.

i) Draw the input $(x, y)$-space for this Perceptron, showing the 8 instances.

ii) Calculate where the decision boundary will be and draw it in the input space. Show all your steps and calculations.

iii) Does the Perceptron classify the data correctly? If so, prove it. If not, show what can be done to correct this. Show all your steps and calculations (HINT: you do not have to re-calculate the weights).
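A minimal sketch of the arithmetic behind parts ii) and iii) follows. It assumes that the bias is called $w_0$ and that $w_1$ and $w_2$ multiply $x$ and $y$ respectively. These names are assumptions, since the actual values live only in Figure 1. The decision boundary is the set of points where the net input is exactly zero:

$$w_0 + w_1 x + w_2 y = 0 \;\Rightarrow\; y = -\frac{w_1}{w_2}\,x - \frac{w_0}{w_2} \qquad (w_2 \neq 0)$$

Points with $w_0 + w_1 x + w_2 y \ge 0$ are output as 1. Negating all three values leaves the line unchanged but swaps which side is output as 1 (the polarity). The weights used below are HYPOTHETICAL placeholders chosen only so the demo has something to show. They are not the values from Figure 1.

```python
from typing import Dict, List, Tuple

Point = Tuple[float, float]
Weights = Tuple[float, float, float]  # (w0 = bias, w1 for x, w2 for y): assumed naming

# The eight labelled instances given in the question.
POSITIVES: Dict[str, Point] = {"P1": (-4, 4), "P2": (1, 4), "P3": (2, 6), "P4": (6, 2)}
NEGATIVES: Dict[str, Point] = {"N1": (-3, 1), "N2": (1, -5), "N3": (6, -4), "N4": (4, -2)}


def threshold(z: float) -> int:
    """f_threshold(z) = 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0


def net_input(w: Weights, p: Point) -> float:
    """Weighted sum z = w0 + w1*x + w2*y for the point p = (x, y)."""
    w0, w1, w2 = w
    x, y = p
    return w0 + w1 * x + w2 * y


def predict(w: Weights, p: Point) -> int:
    """Perceptron output: threshold applied to the net input."""
    return threshold(net_input(w, p))


def boundary(w: Weights) -> Tuple[float, float]:
    """Slope and y-intercept of the line w0 + w1*x + w2*y = 0.

    Requires w2 != 0; if w2 == 0 the boundary is the vertical line x = -w0 / w1.
    """
    w0, w1, w2 = w
    if w2 == 0:
        raise ValueError("w2 == 0: boundary is the vertical line x = -w0 / w1")
    return -w1 / w2, -w0 / w2


def misclassified(w: Weights) -> List[str]:
    """Names of instances whose predicted label differs from their true label."""
    wrong = [name for name, p in POSITIVES.items() if predict(w, p) != 1]
    wrong += [name for name, p in NEGATIVES.items() if predict(w, p) != 0]
    return wrong


def flip_polarity(w: Weights) -> Weights:
    """Negate bias and weights: same line, opposite side classified as 1.

    Points lying exactly on the line (z == 0) still output 1, because
    f_threshold(0) == 1 under either polarity.
    """
    w0, w1, w2 = w
    return (-w0, -w1, -w2)


if __name__ == "__main__":
    # HYPOTHETICAL placeholder weights, NOT the values from Figure 1.
    w = (2.0, -1.0, -2.0)
    print("boundary (slope, intercept):", boundary(w))  # (-0.5, 1.0)
    print("misclassified:", misclassified(w))  # all eight instances
    print("after flipping polarity:", misclassified(flip_polarity(w)))  # []
```

With these placeholder weights, every instance lands on the wrong side. Negating the bias and weights yields the same line, $y = -0.5x + 1$, with no misclassifications. This is the kind of correction the HINT in part iii) points toward, without re-training. Substitute the real values from Figure 1 before drawing any conclusion about that Perceptron.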